Processors for Embedded Vision


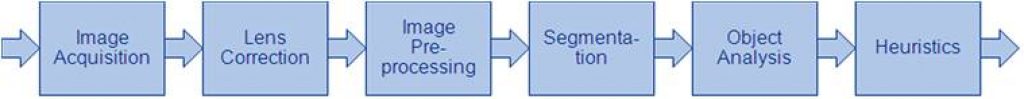

This technology category includes any device that executes vision algorithms or vision system control software. The diagram above shows a typical computer vision pipeline; processors are often optimized for the compute-intensive portions of the software workload.
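To make "compute-intensive" concrete, here is a back-of-the-envelope count for a single pixel-level stage. This is a sketch under stated assumptions: the function name `conv_macs_per_second` is hypothetical, the stage is assumed to be a dense k x k convolution applied to every pixel of every frame (border handling ignored), and the 1080p at 30 frames/s figures are illustrative rather than taken from the diagram.

```python
def conv_macs_per_second(width: int, height: int, kernel_size: int, fps: float) -> float:
    """Multiply-accumulates per second for a dense 2-D convolution applied
    to every pixel of every frame (hypothetical helper; borders ignored)."""
    macs_per_pixel = kernel_size * kernel_size  # one MAC per kernel tap
    return width * height * macs_per_pixel * fps


if __name__ == "__main__":
    # Illustrative assumption: a 3x3 filter on 1080p video at 30 frames/s.
    rate = conv_macs_per_second(1920, 1080, 3, 30)
    print(f"{rate:,.0f} MAC/s")
```

Even this one small filter needs roughly 0.56 billion multiply-accumulates per second, while the decision logic at the end of a pipeline may only handle a few detected objects per frame; that imbalance is why processors are tuned for the pixel-level stages.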

The following examples represent distinctly different types of processor architectures for embedded vision, and each has advantages and trade-offs that depend on the workload. For this reason, many devices combine multiple processor types into a heterogeneous computing environment, often integrated into a single semiconductor component. In addition, a processor can be accelerated by dedicated hardware that improves performance on computer vision algorithms.

General-purpose CPUs

While computer vision algorithms can run on most general-purpose CPUs, desktop processors may not meet the design constraints of some systems, such as power, size, or cost. However, x86 processors and system boards can leverage the PC infrastructure for low-cost hardware and broadly supported software development tools. Several Alliance Member companies also offer devices that integrate a RISC CPU core. A general-purpose CPU is best suited for heuristics, complex decision-making, network access, user interface, storage management, and overall control. A general-purpose CPU may be paired with a vision-specialized device for better performance on pixel-level processing.
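The CPU/accelerator split described above can be sketched as a control loop that hands pixel-level work to whatever device is available and keeps the branchy decision logic on the CPU. Everything here is hypothetical: `PixelAccelerator` is an illustrative interface, not a vendor API, and `CpuFallback` simply implements the same thresholding operation in software so the example runs anywhere.

```python
from typing import List, Protocol

Image = List[List[int]]  # grayscale image: rows of 0-255 pixel values


class PixelAccelerator(Protocol):
    """Hypothetical interface to a vision-specialized device (GPU, DSP, FPGA, ...)."""

    def threshold(self, image: Image, level: int) -> Image: ...


class CpuFallback:
    """Pure-software implementation of the same pixel-level operation."""

    def threshold(self, image: Image, level: int) -> Image:
        return [[255 if px >= level else 0 for px in row] for row in image]


def control_loop(frame: Image, accel: PixelAccelerator, min_pixels: int) -> str:
    # Pixel-level work: delegated to whichever device implements the interface.
    mask = accel.threshold(frame, level=128)
    # Decision-making: low-volume, branchy logic that stays on the CPU.
    foreground = sum(1 for row in mask for px in row if px)
    return "object" if foreground >= min_pixels else "empty"
```

In a real design the accelerator object would wrap a vendor SDK or driver; keeping the interface narrow lets the same control code run with or without the specialized device.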

Graphics Processing Units

High-performance GPUs deliver massive parallel computing capacity, and graphics processors can be used to accelerate the portions of the computer vision pipeline that perform parallel processing on pixel data. While general-purpose GPUs (GPGPUs) have primarily been used for high-performance computing (HPC), even mobile graphics processors and integrated graphics cores are gaining GPGPU capability while meeting the power constraints of a wider range of vision applications. In designs that require 3D processing in addition to embedded vision, a GPU will already be part of the system and can be used to assist a general-purpose CPU with many computer vision algorithms. There are many examples of x86-based embedded systems with discrete GPGPUs.
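GPUs accelerate pixel-level stages because many operations compute each output pixel independently of the others. The sketch below loosely mimics the kernel-and-grid model used by GPU programming frameworks such as CUDA and OpenCL: `brighten_kernel` handles exactly one pixel, and `launch_2d` is a hypothetical serial stand-in for launching one GPU thread per pixel. It is plain Python and does not run on a GPU.

```python
from typing import Callable, List

Image = List[List[int]]  # grayscale image: rows of 0-255 pixel values


def brighten_kernel(x: int, y: int, src: Image, dst: Image, gain: float) -> None:
    """Per-pixel 'kernel': each invocation writes exactly one output pixel,
    so invocations are independent and could run in parallel."""
    dst[y][x] = min(255, int(src[y][x] * gain))


def launch_2d(kernel: Callable[..., None], width: int, height: int, *args) -> None:
    """Serial stand-in for a GPU grid launch: one call per (x, y) 'thread'.
    On a GPU these calls would be spread across many parallel cores."""
    for y in range(height):
        for x in range(width):
            kernel(x, y, *args)
```

For example, `launch_2d(brighten_kernel, 2, 2, [[10, 100], [200, 250]], dst, 1.5)` fills `dst` with `[[15, 150], [255, 255]]`. Because no invocation reads another invocation's output, the loop order does not matter, which is the property that lets a GPU run them concurrently.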

Digital Signal Processors

DSPs are very efficient at processing streaming data, since their bus and memory architectures are optimized to process high-speed data as it traverses the system. This architecture makes DSPs an excellent solution for processing image pixel data as it streams from a sensor source. Many DSPs for vision have been enhanced with coprocessors that are optimized for processing video inputs and accelerating computer vision algorithms. The specialized nature of DSPs makes them inefficient for general-purpose software workloads, so DSPs are usually paired with a RISC processor to create a heterogeneous computing environment that offers the best of both worlds.
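Processing pixels "as they stream from a sensor" means working on a small, fixed window of recent samples instead of waiting for a complete frame. The generator below illustrates that data flow with a moving-average filter, chosen only as a simple stand-in; a real DSP would typically use hardware line buffers and fixed-point multiply-accumulate units, and the function name `streaming_moving_average` is hypothetical.

```python
from collections import deque
from typing import Deque, Iterable, Iterator


def streaming_moving_average(pixels: Iterable[int], taps: int = 3) -> Iterator[int]:
    """Filter a pixel stream on the fly, keeping only the last `taps` samples.

    Memory use is fixed regardless of frame size, mirroring how a DSP works on
    data as it arrives. Output starts once the window is full (no padding).
    """
    if taps < 1:
        raise ValueError("taps must be at least 1")
    window: Deque[int] = deque(maxlen=taps)
    total = 0
    for px in pixels:
        if len(window) == taps:
            total -= window[0]  # oldest sample, about to be evicted by append()
        window.append(px)
        total += px
        if len(window) == taps:
            yield total // taps  # integer average, as fixed-point hardware might
```

For instance, `list(streaming_moving_average([3, 6, 9, 12]))` yields `[6, 9]`. The running total means each new pixel costs one add and one subtract, regardless of the window size.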

Field Programmable Gate Arrays (FPGAs)

Instead of incurring the high cost and long lead times of a custom ASIC to accelerate computer vision systems, designers can use an FPGA as a reprogrammable hardware-acceleration solution. With millions of programmable gates, hundreds of I/O pins, and compute performance in the trillions of multiply-accumulates per second (tera-MACs), high-end FPGAs offer the potential for the highest performance in a vision system. Unlike a CPU, which has to time-slice or multi-thread tasks as they compete for compute resources, an FPGA can accelerate multiple portions of a computer vision pipeline simultaneously. Because the parallel nature of FPGAs offers such an advantage for accelerating computer vision, semiconductor vendors provide many of these algorithms as optimized libraries. These computer vision libraries also include preconfigured interface blocks for connecting to other vision devices, such as IP cameras.
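The advantage of accelerating several pipeline stages at once can be estimated with a simple throughput model. This sketch assumes each stage gets its own dedicated hardware on the FPGA, ignores transfer overheads and clock-domain details, and models the CPU as running the stages one after another on a single core; all function names, as well as the stage timings, MAC-unit count, and clock rate in the usage note, are hypothetical.

```python
from typing import Sequence


def sequential_fps(stage_ms: Sequence[float]) -> float:
    """One processor runs every stage in turn for each frame."""
    return 1000.0 / sum(stage_ms)


def pipelined_fps(stage_ms: Sequence[float]) -> float:
    """Each stage has its own hardware, so all stages work on different
    frames at the same time; throughput is set by the slowest stage."""
    return 1000.0 / max(stage_ms)


def pipeline_latency_ms(stage_ms: Sequence[float]) -> float:
    """A single frame still has to pass through every stage."""
    return float(sum(stage_ms))


def peak_macs_per_second(mac_units: int, clock_hz: float) -> float:
    """Upper bound when every MAC unit completes one operation per clock cycle."""
    return mac_units * clock_hz
```

With stage times of 4, 2, and 3 ms, `sequential_fps` gives about 111 frames/s, while `pipelined_fps` gives 250 frames/s at the same 9 ms per-frame latency. Likewise, `peak_macs_per_second(2000, 500e6)` returns 1e12, or 1 tera-MAC/s, showing how a tera-MAC figure can arise from many parallel MAC units running at a modest clock rate.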

Vision-Specific Processors and Cores

Application-specific standard products (ASSPs) are specialized, highly integrated chips tailored for specific applications or application sets. ASSPs may incorporate a CPU or work with a separate CPU chip. By virtue of their specialization, ASSPs for vision processing typically deliver superior cost- and energy-efficiency compared with other types of processing solutions. Among other techniques, ASSPs deliver this efficiency through the use of specialized coprocessors and accelerators. Because ASSPs are by definition focused on a specific application, they usually come with extensive associated software. This same specialization, however, means that an ASSP designed for vision is typically not suitable for other applications. ASSPs’ unique architectures can also make them more difficult to program than other kinds of processors; some ASSPs are not user-programmable at all.

Free Webinar Explores Processing Solutions for ADAS and Autonomous Vehicles

On July 24, 2024 at 9 am PT (noon ET), Ian Riches, Vice President of the Global Automotive Practice at TechInsights, will present the free one-hour webinar “Who is Winning the Battle for ADAS and Autonomous Vehicle Processing, and How Large is the Prize?,” organized by the Edge AI and Vision Alliance. Here’s the description,

Neuromorphic Computing, Memory and Sensing: Towards Exponential Growth

This market research report was originally published at the Yole Group’s website. It is reprinted here with the permission of the Yole Group. The neuromorphic market is poised for expansion from smartphones to encompass opportunities in data centers, entertainment, and automotive sectors. The combined neuromorphic sensing and computing markets are anticipated to generate from US$28

BrainChip Blog Series: Exploring the Future of Neuromorphic Computing at the Edge

This blog post was originally published at BrainChip’s website. It is reprinted here with the permission of BrainChip. According to Gartner, traditional computing technologies will hit a digital wall in 2025 and force a shift to new strategies, including those involving neuromorphic computing. With neuromorphic computing, endpoints can create a truly intelligent edge by efficiently

Shaping the Future of AI Responsibly

This blog post was originally published at Qualcomm’s website. It is reprinted here with the permission of Qualcomm. Artificial Intelligence (AI) has taken the world by storm, revolutionizing industries, and transforming the way we live and work. But as AI continues to advance, it is crucial that we prioritize responsible AI practices to ensure a

Digica and J-Squared Announce Concept-to-completion Pipeline for Edge AI Solutions at Embedded Vision Summit 2024

Edge AI services span full life-cycle development for applications across defense, medical, manufacturing, and semiconductor sectors. May 17, 2024 – At the 2024 Embedded Vision Summit, global edge computing leader J-Squared Technologies and international software solutions provider Digica will announce a comprehensive solution for concept-to-completion edge AI development, empowered by the Hailo-8 AI accelerator. The joint

Free Webinar Explores Developments and Prospects in Neuromorphic Sensing and Computing

On July 11, 2024 at 9 am PT (noon ET), Adrien Sanchez and Florian Domengie, senior technology and market analysts at the Yole Group, will present the free one-hour webinar “The Rise of Neuromorphic Sensing and Computing: Technology Innovations, Ecosystem Evolutions and Market Trends,” organized by the Edge AI and Vision Alliance. Here’s the description,

AiM Future Brings GenAI Applications to Mainstream Consumer Devices

Seoul, Korea, and San Jose, CA – May 15, 2024 – AiM Future, a leading provider of concurrent multimodal inference accelerators for edge and endpoint devices, has just announced the launch of its next-generation Generative AI Architecture, “GAIA,” and Synabro software development kit. These GAIA-based accelerators are designed to enable energy-efficient transformers and large language

AI Decoded: New DaVinci Resolve Tools Bring RTX-accelerated Renaissance to Editors

This blog post was originally published at NVIDIA’s website. It is reprinted here with the permission of NVIDIA. Latest update adds two AI-assisted tools that perform best on NVIDIA GPUs. AI tools accelerated by NVIDIA RTX have made it easier than ever to edit and work with video. Case in point: Blackmagic Design’s DaVinci Resolve

Introducing TENN: Revolutionizing Computing with an Energy Efficient Transformer Replacement

This blog post was originally published at BrainChip’s website. It is reprinted here with the permission of BrainChip. TENN, or Temporal Event-based Neural Network, is redefining the landscape of artificial intelligence by offering a highly efficient alternative to traditional transformer models. Developed by BrainChip, this technology aims to address the substantial energy and computational demands

The SHD Group, in Collaboration with the Edge AI and Vision Alliance™, to Release a Complimentary Edge AI Processor and Ecosystem Report

SAN JOSE, Calif. – May 14, 2024 – The SHD Group, a leading strategic marketing, research, and business development firm, today announced the creation of an edge AI report that will be a resource for both product developers and ecosystem providers. This guide will detail processors integrating AI accelerators, standalone acceleration chips, accelerator IP, software,

BrainChip and Frontgrade Gaisler to Augment Space-Grade Microprocessors with AI Capabilities

Laguna Hills, Calif. and Göteborg, Sweden – May 6, 2024 – BrainChip Holdings Ltd (ASX: BRN, OTCQX: BRCHF, ADR: BCHPY), the world’s first commercial producer of ultra-low power, fully digital, event-based, neuromorphic AI IP, and Frontgrade Gaisler, a leading provider of space-grade system-on-chip solutions, announce their collaboration to explore the integration of BrainChip’s Akida™ neuromorphic

Avnet to Exhibit at the 2024 Embedded Vision Summit

05/09/2024 – PHOENIX – Avnet’s exhibit plans for the 2024 Embedded Vision Summit include new development kits supporting AI applications. The summit is the premier event for practical, deployable computer vision and edge AI, for product creators who want to bring visual intelligence to products. This year’s Summit will be May 21-23, in Santa Clara, California. This

Korean Semiconductor Industry Titans Back DEEPX in Series C Funding Round

SEOUL, South Korea, May 10, 2024 /PRNewswire/ — DEEPX, a prominent AI semiconductor technology startup under the leadership of CEO Lokwon Kim, is delighted to announce the successful conclusion of its Series C funding round, amassing $80.5M (KRW 110B). Led by esteemed private equity firms, this significant financial investment will accelerate the mass production of

Beyond Smart: The Rise of Generative AI Smartphones

This blog post was originally published at Qualcomm’s website. It is reprinted here with the permission of Qualcomm. From live translations to personalized content management — the new era of mobile intelligence It’s 2024, and generative artificial intelligence (AI) is finally in people’s hands. Literally. This year’s early slate of flagship smartphone releases is a

Lattice to Showcase Advanced Edge AI Solutions at Embedded Vision Summit 2024

May 08, 2024 04:00 PM Eastern Daylight Time–HILLSBORO, Ore.–(BUSINESS WIRE)–Lattice Semiconductor (NASDAQ: LSCC), the low power programmable leader, today announced that it will showcase its latest FPGA technology at Embedded Vision Summit 2024. The Lattice booth will feature industry-leading low power, small form factor FPGAs and application-specific solutions enabling advanced embedded vision, artificial intelligence, and

BrainChip Adds Penn State to Roster of University AI Accelerators

Laguna Hills, Calif. – May 8, 2024 – BrainChip Holdings Ltd (ASX: BRN, OTCQX: BRCHF, ADR: BCHPY), the world’s first commercial producer of ultra-low power, fully digital, event-based, neuromorphic AI IP, today announced that Pennsylvania State University has joined the BrainChip University AI Accelerator Program, ensuring students have the tools and resources necessary to help

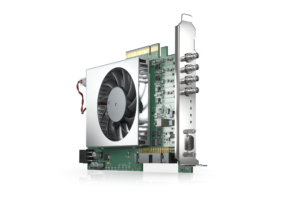

Basler Presents a New, Programmable CXP-12 Frame Grabber

With the imaFlex CXP-12 Quad, Basler AG is expanding its CXP-12 vision portfolio with a powerful, individually programmable frame grabber. Using the graphical FPGA development environment VisualApplets, application-specific image pre-processing and processing for high-end applications can be implemented on the frame grabber. Basler’s boost cameras, trigger boards, and cables combined with the card form a

BrainChip Earns Australian Patent for Improved Spiking Neural Network

Laguna Hills, Calif. – May 6, 2024 – BrainChip Holdings Ltd (ASX: BRN, OTCQX: BRCHF, ADR: BCHPY), the world’s first commercial producer of ultra-low power, fully digital, event-based, neuromorphic AI, received its latest Australian patent, further strengthening its IP portfolio related to sustainable and efficient AI technologies. Patent AU2022287647, “An Improved Spiking Neural Network,” facilitates

Gigantor Technologies Welcomes Major General Viet Luong as Executive Vice President

March 6, 2024 — Gigantor Technologies INC, a leading innovator of high-performance Edge AI systems, proudly announces the appointment of Major General (Retired) Viet Luong as the Executive Vice President. With an illustrious career spanning over three decades in the United States Army, Major General Luong brings invaluable strategic leadership and operational experience to Gigantor

Defense Business Accelerator (DBX) Challenge

Gigantor Technologies Wins $2M Contract at Defense TechConnect Innovation Summit & Expo. February 13, 2024 – Gigantor Technologies, a trailblazer in cutting-edge AI solutions, emerged triumphant at the Defense TechConnect Innovation Summit & Expo in Maryland. Chief Marketing Officer Jessica Jones showcased Gigantor’s pioneering technology at the Defense Business Accelerator (DBX) Microelectronics Challenge, securing a