This blog post was originally published at Qualcomm’s website. It is reprinted here with the permission of Qualcomm.

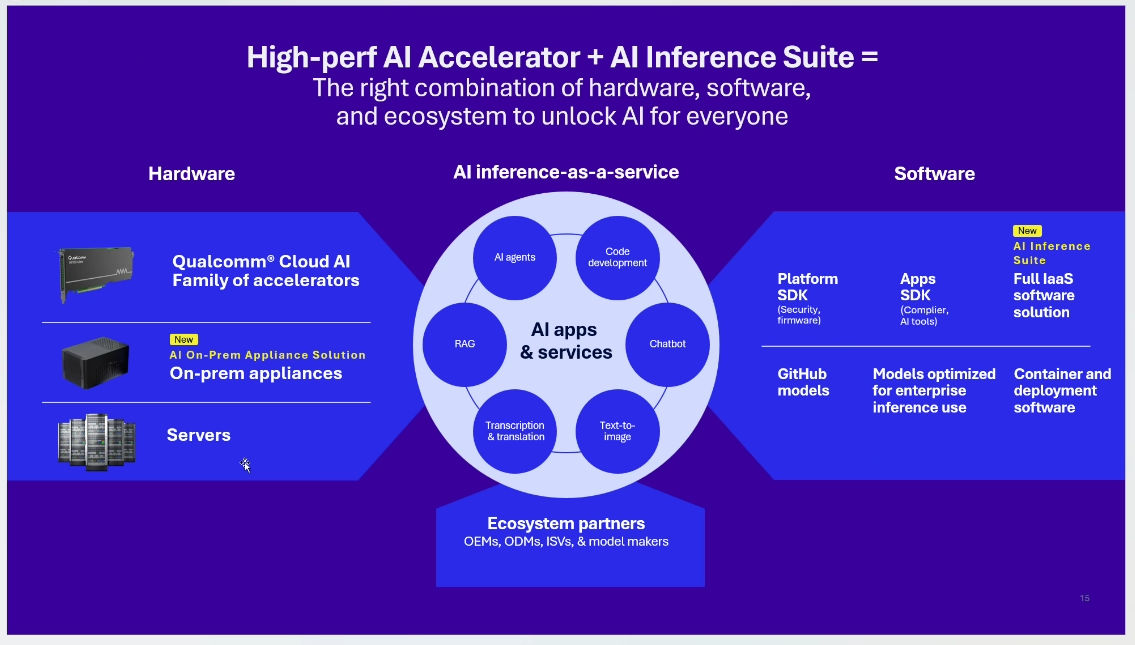

The Qualcomm Dragonwing AI On-Prem Appliance Solution pairs with the software and services in the Qualcomm AI Inference Suite for AI inference that spans from near-edge to cloud. Together, they allow your small-to-medium business, enterprise or industrial organization to run custom and off-the-shelf AI agents and applications, including generative AI workloads, on premises. Running inference on premises can lower your operational costs, ensure your data remains private, reduce your power consumption and greatly reduce latency.

Developers can use the Qualcomm Dragonwing AI On-Prem Appliance Solution and the Qualcomm AI Inference Suite for applications as varied as chatbots, in-store assistants, worker coaching, site-specific information, safety compliance and sales enablement. Also, manufacturers and designers looking for new ways to add value in on-premises AI will find this hardware-software combination ripe for development and experimentation. Ideal locations include retail stores, quick-service restaurants, shopping outlets, dealerships, hospitals, factories and shop floors.

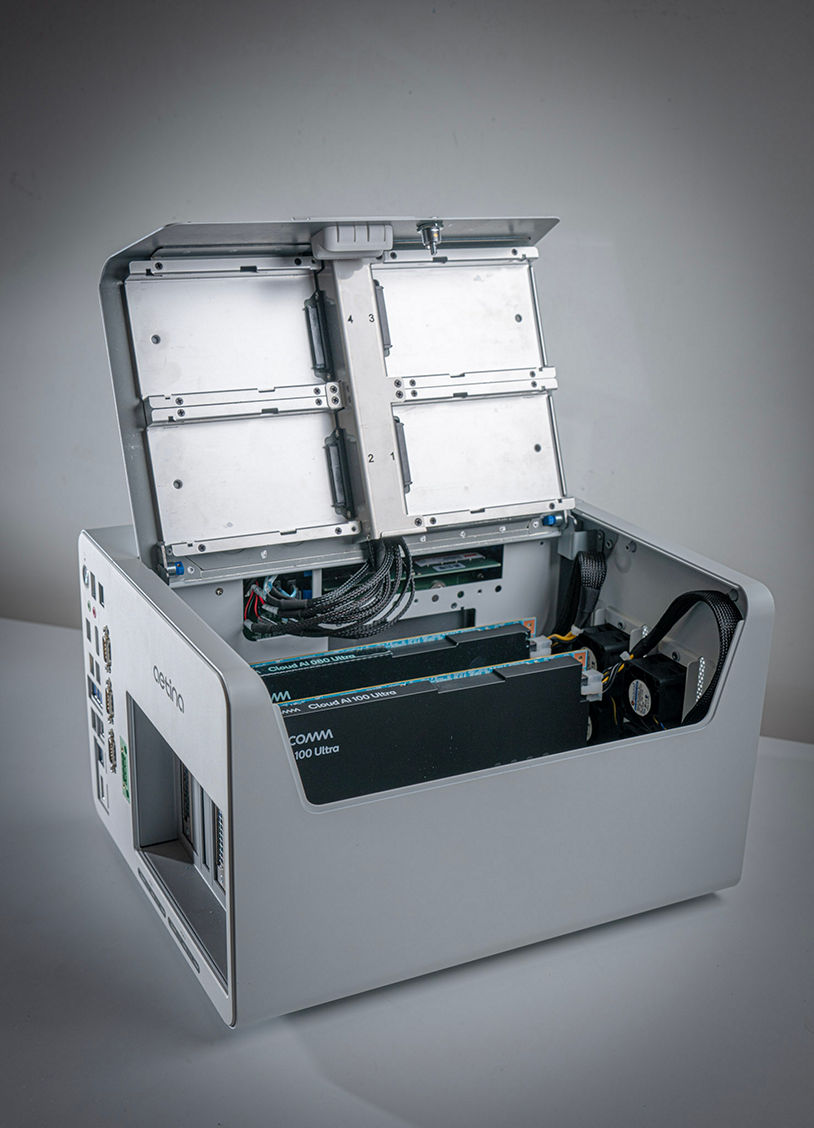

The hardware: Qualcomm Dragonwing AI On-Prem Appliance Solution

The Qualcomm Dragonwing AI On-Prem Appliance Solution is powered by the Qualcomm Cloud AI family of accelerator cards for industrial and embedded IoT.

The hardware is a plug-in solution designed to scale from a standalone, desktop product up to a wall-mounted appliance, with no need for dedicated infrastructure. It gives original equipment manufacturers (OEMs), original design manufacturers (ODMs) and system integrators (SIs) the flexibility to bring new offerings to market based on multiple configuration options:

- Basic (available now) – Ideal for AI models up to 10 billion parameters and applications using computer vision and small language models (SLMs)

- Plus (available now) – Ideal for AI models up to 30 billion parameters and applications using large language models (LLMs)

- Premier (available soon) – Ideal for models up to 70 billion parameters and LLM applications demanding high performance and accuracy

With that much flexible local compute power for AI inference, you can now keep workloads on your own premises. You can run a wide range of models in house, both open-source and proprietary, for generative AI, natural language processing and computer vision.
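To make the three configuration tiers above concrete, here is a minimal sketch that picks the smallest configuration whose stated ceiling covers a given model size. The `TIERS` table and the `pick_tier` function are hypothetical names used only for illustration, and treating "up to" as an inclusive limit is an assumption. Real sizing would also depend on factors such as quantization, context length and concurrency.

```python
from typing import Optional

# Parameter ceilings (in billions) taken from the configuration list above.
# The table and function names are hypothetical, for illustration only.
TIERS = [
    ("Basic", 10),
    ("Plus", 30),
    ("Premier", 70),
]


def pick_tier(params_billion: float) -> Optional[str]:
    """Return the smallest tier whose ceiling covers the model, or None."""
    for name, ceiling in TIERS:
        # Assumption: "up to N billion parameters" includes exactly N.
        if params_billion <= ceiling:
            return name
    return None  # Larger than any listed configuration.


if __name__ == "__main__":
    for size in (8, 13, 70, 180):
        print(f"{size}B parameters -> {pick_tier(size)}")
```

A check like this is only a first filter. It says nothing about throughput or accuracy, which the tier descriptions treat as separate considerations.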

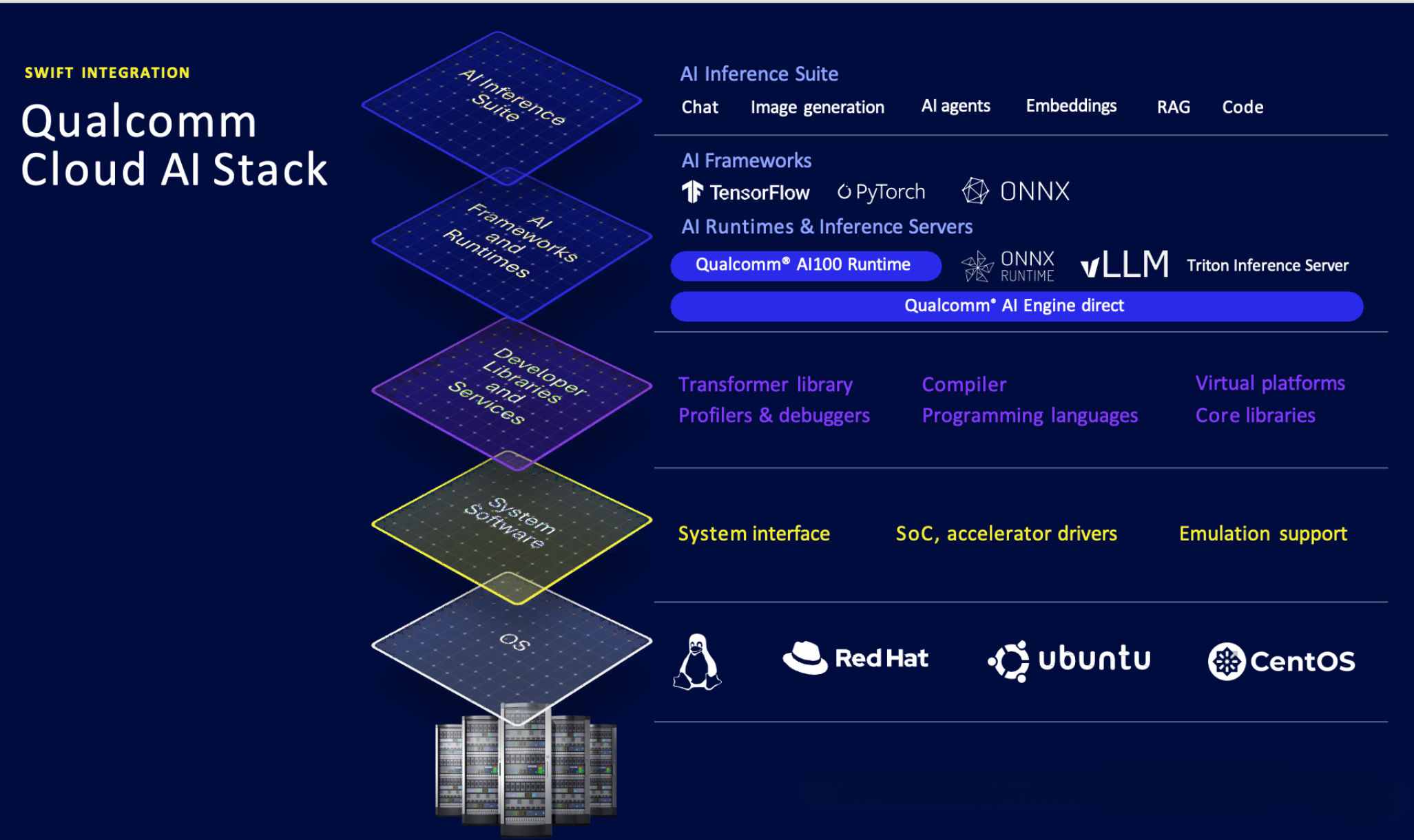

Members of Qualcomm Technologies’ ecosystem are already helping their customers with in-house deployments built on the solution. As shown below, Qualcomm Technologies provides the lower layers in the technology stack, leaving ample room for OEMs, ODMs, SIs and software vendors to add value in the upper layers.

The software: Qualcomm AI Inference Suite

The Qualcomm AI Inference Suite enables software vendors and OEMs/ODMs/SIs to develop generative AI applications on the Qualcomm Dragonwing AI On-Prem Appliance Solution. It provides an SDK and OpenAI-compatible APIs for working with a variety of AI models.
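Because the APIs are described as OpenAI-compatible, a client can in principle be written against the familiar chat-completions request shape. The sketch below builds such a request with only the Python standard library. The base URL, model name and API key are placeholders assumed for illustration, not values from the suite's documentation, and the exact endpoint paths exposed by a given deployment should be confirmed there.

```python
import json
import urllib.request

# Hypothetical values: replace with the address and model of your deployment.
BASE_URL = "http://appliance.local:8000/v1"
MODEL = "example-llm"


def build_chat_request(prompt: str, api_key: str,
                       base_url: str = BASE_URL,
                       model: str = MODEL) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat-completions request."""
    payload = {
        "model": model,
        "messages": [
            {"role": "system", "content": "You are a helpful in-store assistant."},
            {"role": "user", "content": prompt},
        ],
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


def extract_reply(response_body: bytes) -> str:
    """Pull the assistant text out of an OpenAI-style response body."""
    return json.loads(response_body)["choices"][0]["message"]["content"]


if __name__ == "__main__":
    req = build_chat_request("Where can I find running shoes?", api_key="demo-key")
    print(req.get_method(), req.full_url)
```

Sending the request with `urllib.request.urlopen(req)` and passing the response body to `extract_reply` would complete the round trip against a live endpoint.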

With the Qualcomm AI Inference Suite and the Qualcomm Dragonwing AI On-Prem Appliance Solution, you can now run many familiar AI applications locally, including:

- Voice agents in a box

- Chatbots with SLMs, LLMs and large multimodal models (LMMs)

- Retrieval-augmented generation (RAG) functions for intelligent, indexed search and summarization

- Custom AI assistants and agents

- Intelligent search across multiple languages

- Automated drafting and note-taking

- Image generation

- Code generation

- Camera AI to process images and video for security, worker safety and site monitoring

Easy-to-use API endpoints give you access to functions like user management and administration, chat, image generation, RAG, OpenAI API compatibility and audio/video generative AI. The suite lets you use familiar frameworks like LangChain, CrewAI and AutoGen to create AI agents. All components run as containers, either on Kubernetes or standalone, with auto-scaling when deployed on Kubernetes.

The suite includes full API documentation and tutorials to help you get your AI-enabled applications up and running quickly.
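For readers new to retrieval-augmented generation, the sketch below shows the general pattern in miniature: rank a few local documents against a question, then assemble a prompt that grounds the model in the best matches. It is not the suite's RAG endpoint. The bag-of-words `embed` function is a toy stand-in for a real embedding model, and all function names and sample documents are assumptions made for illustration.

```python
import math
from collections import Counter
from typing import List


def embed(text: str) -> Counter:
    """Toy bag-of-words vector; a real system would call an embedding model."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, documents: List[str], k: int = 2) -> List[str]:
    """Return the k documents most similar to the query."""
    q = embed(query)
    ranked = sorted(documents, key=lambda d: cosine(q, embed(d)), reverse=True)
    return ranked[:k]


def build_prompt(query: str, context: List[str]) -> str:
    """Assemble a grounded prompt to send to a chat model."""
    joined = "\n".join(f"- {c}" for c in context)
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{joined}\n\n"
        f"Question: {query}"
    )


if __name__ == "__main__":
    docs = [
        "the store opens at 9 am on weekdays",
        "returns are accepted within 30 days",
        "forklift operators must wear a helmet",
    ]
    question = "when does the store open"
    print(build_prompt(question, retrieve(question, docs, k=1)))
```

In an on-premises deployment, the appeal of this pattern is that both the indexed documents and the generated answers stay on site.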

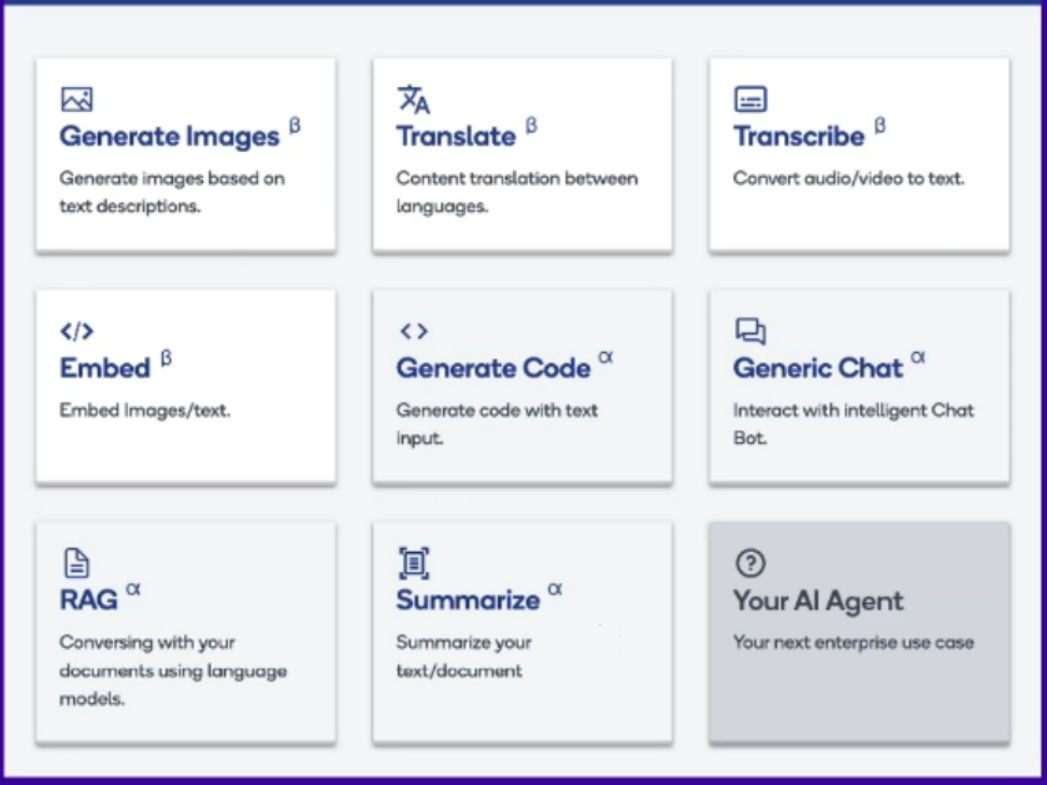

Sample apps and a playground

To get you started, we’ve made available a package of sample applications you can run on sample hardware.

On the Playground for Qualcomm Cloud AI, you can run applications from the Qualcomm AI Inference Suite directly on Qualcomm Cloud AI accelerator cards. As shown below, you can use the sample apps and API endpoints exposed in the playground for image generation, translation, transcription, embedding, code generation, generic chat, RAG and summarization.

(Note that not all of those can run at the same time.)

You can also use the tutorials and documentation included in the playground to build your own applications and AI agents from the ground up.

The accelerator cards in the playground are the same cards that are deployed in Qualcomm Dragonwing AI On-Prem Appliance solutions. To maximize responsiveness and performance, the playground is deployed to multiple regions worldwide.

Bring your own models with a single line of code

You can bring your own models, too. You’re not limited to the models in the playground.

With the Qualcomm Efficient Transformer Library, you can onboard and deploy popular models from Hugging Face, or bring your own models with a single line of code. The library compiles and optimizes your model to run on Qualcomm Cloud AI accelerator cards and on the Qualcomm Dragonwing AI On-Prem Appliance Solution. Numerous models, including text-only language models and embedding models, have already been validated and added to the library.

We’ve designed our entire solution to keep you focused on creating applications and agents instead of modifying and converting your models. And we’ve designed the Qualcomm Efficient Transformer Library so that you can train anywhere and infer easily on Qualcomm Cloud AI accelerators, using a developer-centric toolchain. You provide only the model card from Hugging Face (or the path to the local model). The library transforms and optimizes your models for high performance on Qualcomm Cloud AI accelerators.

Next steps

Whether you’re a software developer focused on designing, coding and maintaining software applications or a hardware designer interested in designing, building and selling hardware, there is a place for you in the ecosystem around our Qualcomm Dragonwing AI On-Prem Appliance Solution. Where do you fit in the diagram below?

Software vendors, model makers and developers:

Five minutes from now, you can be test-driving the Qualcomm AI Inference Suite on the Qualcomm Dragonwing AI On-Prem Appliance Solution in the playground. You can try out generative AI applications such as chat, transcription, translation and summarization with easy-to-use endpoints.

We’ve made it as frictionless as possible for you to get onto the playground with a simple “Sign in with Google” button. No credit card, no personal information, no delay. We think you’ll be impressed by the performance you see.

You can take a look at the documentation and tutorials for the suite, then visit our Developer Discord for deeper insights from our experts and real-time conversations with fellow developers.

OEMs, ODMs and SIs:

There’s room for your company in this market. You can easily build the Qualcomm Dragonwing AI On-Prem Appliance Solution and Qualcomm AI Inference Suite into the next product you offer your customers.

Several members of our hardware ecosystem are working to bring the Qualcomm Dragonwing AI On-Prem Appliance Solution to market as their commercial offering. Contact Aetina for more details on their MegaEdge AIP-FR68 AI workstation (pictured at top).

And contact our sales group to find out how you can start using our ready-made solutions to offer your customers performant, cost-effective AI inference on premises.

Robert Morrison

Director of Engineering, Qualcomm Technologies

Evgeni Gousev

Senior Director, Research and Development, Qualcomm Technologies