Applications


Practical AI and computer vision technology is being developed for systems that span virtually every application area, and many of these areas are expected to grow rapidly. With trendsetting products demonstrating what is possible, system designers have discovered that suppliers of computer vision technology have removed the barriers to building practical computer vision systems, unleashing a wave of innovation in new products and applications.


Vision Language Model Prompt Engineering Guide for Image and Video Understanding

This blog post was originally published at NVIDIA’s website. It is reprinted here with the permission of NVIDIA. Vision language models (VLMs) are evolving at a breakneck speed. In 2020, the first VLMs revolutionized the generative AI landscape by bringing visual understanding to large language models (LLMs) through the use of a vision encoder. These

In-cabin Sensing 2025-2035: Technologies, Opportunities, and Markets

For more information, visit https://www.idtechex.com/en/research-report/in-cabin-sensing-2025-2035-technologies-opportunities-and-markets/1077. The Yearly Market Size for In-cabin Sensors will Exceed $6B by 2035. Regulations like the Advanced Driver Distraction Warning (ADDW) and General Safety Regulation (GSR) are driving the growing importance of in-cabin sensing, particularly driver and occupancy monitoring systems. IDTechEx’s report, “In-Cabin Sensing 2025-2035: Technologies, Opportunities, Markets”, examines these regulations

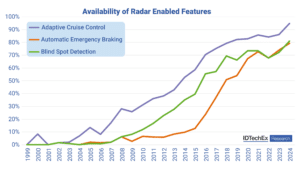

Nearly $1B Flows into Automotive Radar Startups

According to IDTechEx’s latest report, “Automotive Radar Market 2025-2045: Robotaxis & Autonomous Cars”, newly established radar startups worldwide have raised nearly US$1.2 billion over the past 12 years, approximately US$980 million of which is directed predominantly toward the automotive sector. Through more than 40 funding rounds, these companies have driven the implementation and advancement of

New AI Model Offers Cellular-level View of Cancerous Tumors

This blog post was originally published at NVIDIA’s website. It is reprinted here with the permission of NVIDIA. Researchers studying cancer unveiled a new AI model that provides cellular-level mapping and visualizations of cancer cells, which scientists hope can shed light on how, and why, certain inter-cellular relationships trigger cancers to grow. BioTuring, a San Diego-based startup,

Top-tier ADAS Systems: Exploring Automotive Radar Technology

Radars have had a place within the automotive sector for over two decades, beginning with their first use in adaptive cruise control, with many other developments taking place since. IDTechEx’s “Automotive Radar Market 2025-2045: Robotaxis & Autonomous Cars” report explores the latest developments in radar technology within the automotive sector. ADAS safety systems ADAS (advanced

AI Disruption is Driving Innovation in On-device Inference

This article was originally published at Qualcomm’s website. It is reprinted here with the permission of Qualcomm. How the proliferation and evolution of generative models will transform the AI landscape and unlock value. The introduction of DeepSeek R1, a cutting-edge reasoning AI model, has caused ripples throughout the tech industry. That’s because its performance is on

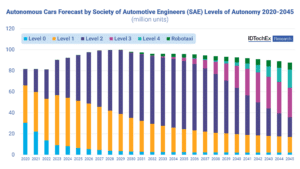

Autonomous Cars are Leveling Up: Exploring Vehicle Autonomy

When the Society of Automotive Engineers released their definitions of varying levels of automation from level 0 to level 5, it became easier to define and distinguish between the many capabilities and advancements of autonomous vehicles. Level 0 describes an older model of vehicle with no automated features, while level 5 describes a future ideal

STMicroelectronics and HighTec EDV-Systeme Collaborate for Safer Software-defined Vehicles

Where safety meets safety: ST’s Stellar MCUs, certified to the highest level of risk management, ISO 26262 ASIL D, are now supported with the same safety level by HighTec’s Rust compiler. Geneva, Switzerland and Saarbrücken, Germany, February 4, 2025 – STMicroelectronics (NYSE: STM), a global semiconductor leader serving customers across the spectrum of electronics applications,

Empowering Civil Construction with AI-driven Spatial Perception

This blog post was originally published at Au-Zone Technologies’ website. It is reprinted here with the permission of Au-Zone Technologies. Transforming Safety, Efficiency, and Automation in Construction Ecosystems. In the rapidly evolving field of civil construction, AI-based spatial perception technologies are reshaping the way machinery operates in dynamic and unpredictable environments. These systems enable advanced

AI Chips Take Center Stage at CES

This market research report was originally published at the Yole Group’s website. It is reprinted here with the permission of the Yole Group. The 2025 edition of the world’s largest consumer electronics conference showcased the latest semiconductors for artificial intelligence applications. The CES 2025 conference in Las Vegas showcased the latest semiconductor innovations, offering some